A few weeks ago, a prospect shared a story that gave us a front-row seat to an entire North Korean IT worker fraud operation.

A candidate had made it past the recruiter screen. Then past the hiring manager screen. Solid resume, credible work history, smooth answers. Nothing raised any red flags. They moved forward to a technical screen.

Then, while the candidate was sharing their screen and setting up their coding environment, they accidentally hit F3 (which expands all open apps into a grid view). Because the interview was being recorded, the team could replay the footage, and they saw everything:

- DeepLiveCam running in the background - real-time deepfake software mapping a stolen LinkedIn photo onto someone else's face.

- AnyDesk open twice - remote desktop software letting someone else control the machine, a hallmark of North Korean IT worker schemes.

- OBS recording the session.

- Discord open to a coordination channel.

- A document labeled "Interview Response Assistance" feeding answers.

- A Google Drive file with another person's resume - the real person whose identity was being stolen.

- Even Google Maps with an address lookup to help answer "where are you based?" convincingly.

The person on the Zoom call wasn't the person on the resume. They weren't even in the same country. They were an IT worker fraud operator, wearing someone else's face, using someone else's credentials, moving through the process of a legitimate company.

What We Found When We Started Looking

That F3 incident made us curious: how often is this actually happening?

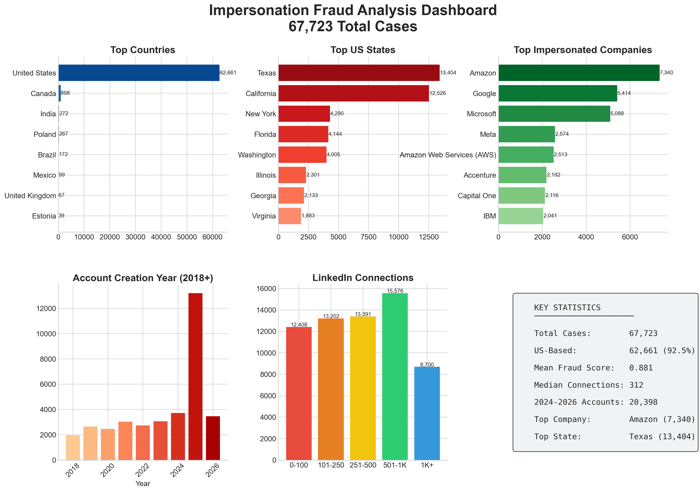

We pulled every case that our resume fraud detection tool flagged as a possible impersonation - situations where someone appeared to be applying under an identity that wasn't theirs. Since the beginning of 2026, we have identified 67,000 cases.

Not 67,000 fake resumes. Not 67,000 fabricated credentials. 67,000 cases where a real person's identity was being used by someone else.

The Perfect Target

As we analyzed these cases, a clear profile emerged. Impersonators aren't targeting random LinkedIn accounts. They're selecting identities with specific characteristics:

No LinkedIn profile picture. This is the critical one. When there's no photo, you have no way to verify who you're talking to on a video call. The person on camera could be anyone - and without a reference image, you're forced to assume they are who they claim to be.

An aged LinkedIn account. These aren't freshly created profiles. The median account in our dataset has years of history. Impersonators specifically seek out established profiles because they hold up to scrutiny. A profile created in 2015 looks far more legitimate than one created last month.

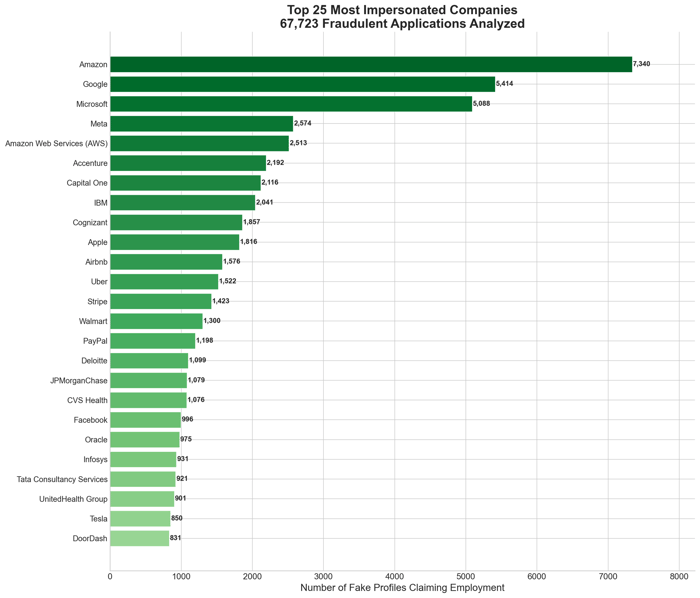

Impressive employers. Amazon, Google, Microsoft, Meta - the companies that carry the most weight on a resume. Over a quarter of all impersonation cases claim experience at just three companies: Amazon (10.8%), Google (8.0%), and Microsoft (7.5%).

A perfect resume. Clean formatting. Logical career progression. The right keywords. Everything a recruiter wants to see. That's the point - these are real professionals with real accomplishments. The resume isn't fabricated. It's stolen.

The Key Insight

Here's what makes IT worker fraud impersonation different from traditional resume fraud: these are real people.

The LinkedIn profile is real. The work history is real. The credentials are real. The person being impersonated actually worked or works at those companies, actually has those skills, actually built that career.

But the person applying for the job isn't them.

It's someone else using their name, their resume, their professional reputation, to get hired. The victim might be a software engineer in Seattle who has no idea their identity is being used. They only find out when a recruiter reaches out about a job they never applied for.

Some professionals have even added warnings to their LinkedIn bios: "I am being impersonated by others. Please verify my identity before proceeding."

That's not paranoia. That's the new reality.

The Scale of the Problem

The 67,000 cases we identified since January 1, 2026 reveal clear patterns:

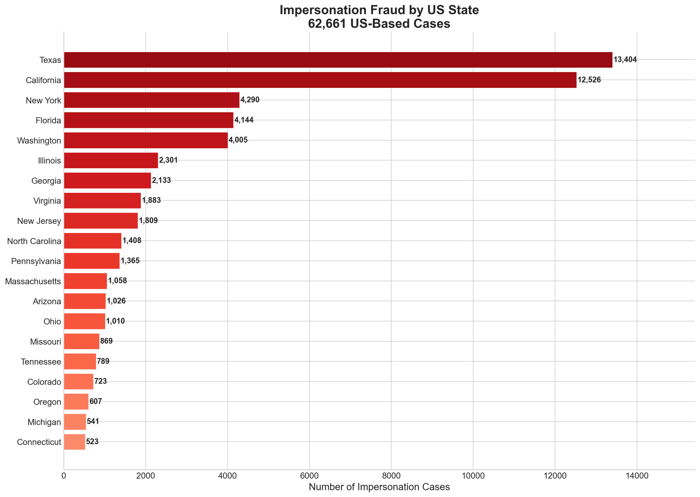

92.6% claim to be based in the United States. Of those US-based cases, Texas and California alone account for over 40% - with Texas leading at 21.5% and California close behind at 20%. These aren't random distributions - they correlate with where the most desirable remote-friendly employers are headquartered and where salary expectations are highest.

| State | Share of US Cases |
|---|---|
| Texas | 21.5% |
| California | 20.0% |
| New York | 6.8% |
| Florida | 6.7% |
| Washington | 6.3% |
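One detail worth spelling out: the state percentages are shares of US-based cases, not of all 67,000. The short sketch below converts them into approximate case counts and into shares of the full dataset. It uses only the figures quoted in this section; the function names are ours for illustration, and the counts are rounded estimates rather than reported numbers.

```python
# Figures quoted in this post.
TOTAL_CASES = 67_000   # cases identified since January 1, 2026
US_SHARE_PCT = 92.6    # percent of all cases claiming a US location

# Percent of US-based cases, per state (top five only).
state_share_of_us_pct = {
    "Texas": 21.5,
    "California": 20.0,
    "New York": 6.8,
    "Florida": 6.7,
    "Washington": 6.3,
}

def us_case_count():
    """Approximate number of cases claiming a US location."""
    return round(TOTAL_CASES * US_SHARE_PCT / 100)

def state_case_count(state):
    """Approximate number of cases claiming the given state."""
    return round(us_case_count() * state_share_of_us_pct[state] / 100)

def state_share_of_all_pct(state):
    """The state's share of ALL cases, not just US-based ones."""
    return round(US_SHARE_PCT * state_share_of_us_pct[state] / 100, 1)

print(f"US-based cases: ~{us_case_count():,}")
for state in state_share_of_us_pct:
    print(f"{state}: ~{state_case_count(state):,} cases, "
          f"{state_share_of_all_pct(state)}% of all cases")
```

Read this way, Texas alone works out to roughly one in five of every impersonation case in the dataset.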

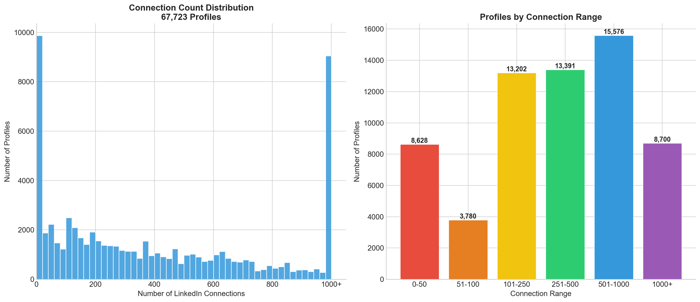

Over a third have 500+ LinkedIn connections. The median connection count is 311. Nearly 13% have over 1,000. These aren't bare-bones profiles - they're developed enough to pass any cursory LinkedIn check.

The top impersonated companies are exactly who you'd expect. Amazon leads at 10.8% of all cases. Google follows at 8.0%. Microsoft at 7.5%. These are the companies whose names carry the most weight - and IT worker fraud operators know it.

| Company | Share of Cases |
|---|---|
| Amazon | 10.8% |
| Google | 8.0% |
| Microsoft | 7.5% |
| Meta | 3.8% |
| AWS | 3.7% |
| Accenture | 3.3% |
| Capital One | 3.1% |
| IBM | 3.0% |
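To make the "over a quarter" figure concrete, here is a small sketch that adds up the employer shares quoted in this section and turns them into approximate case counts. One assumption to flag: summing the shares treats them as non-overlapping, which would overstate the total if a single stolen resume lists more than one of these employers. The helper names are illustrative only.

```python
TOTAL_CASES = 67_000  # cases identified since the beginning of 2026

# Percent of all impersonation cases claiming each employer.
company_share_pct = {
    "Amazon": 10.8,
    "Google": 8.0,
    "Microsoft": 7.5,
    "Meta": 3.8,
    "AWS": 3.7,
    "Accenture": 3.3,
    "Capital One": 3.1,
    "IBM": 3.0,
}

def combined_share(companies):
    """Sum the percentage shares for the given companies.
    Assumes the shares do not overlap (see the caveat above)."""
    return round(sum(company_share_pct[c] for c in companies), 1)

def approx_cases(share_pct, total=TOTAL_CASES):
    """Convert a percentage share into an approximate case count."""
    return round(total * share_pct / 100)

top_three = combined_share(["Amazon", "Google", "Microsoft"])
print(f"Top three combined: {top_three}%")
print(f"Approximate cases: {approx_cases(top_three):,}")
```

Under that assumption, the top three employers account for 26.3% of cases, or roughly 17,600 of the 67,000.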

How the Operation Works

The F3 screenshot revealed the full infrastructure behind a modern impersonation operation:

Deepfake Layer: Real-time face-swapping software maps a photo onto the operator's face during video calls. If the target profile has a photo, they use it. If not, they don't need one - no one can verify what the real person looks like anyway.

Remote Access Layer: Tools like AnyDesk let a second person - often someone with genuine technical skills - control the machine during coding assessments. The "candidate" on camera is just the face. Someone else is doing the work.

Coordination Layer: Discord channels and similar tools let teams coordinate in real-time. One person handles the video presence, another handles technical questions, another feeds talking points.

Research Layer: The victim's resume, LinkedIn profile, and public information are pulled into documents for quick reference. Address lookups help the operator answer location questions convincingly.

This is why surface-level verification doesn't work anymore. You're not interviewing a person - you're interviewing a production.

What to Watch For

Based on our analysis of 67,000 cases, here are the signals that most often indicate impersonation:

No LinkedIn profile picture. This is the most reliable indicator. When there's no photo, fraudsters don't need deepfake technology - they can simply show up as anyone. The person on the call could be anyone, and you have no way to know.

Contact information that doesn't add up. The email or phone number submitted on the application may not have the history or footprint you'd expect from someone with this candidate's seniority and career trajectory. Legitimate professionals leave trails. Fraudulent contact details often don't.

Camera or lighting oddities. Deepfakes still struggle with certain conditions. Watch for faces that seem slightly "off" when the candidate turns their head, lighting that doesn't quite match the background, or video quality that seems inconsistent.

High-prestige credentials with thin details. Claims of senior roles at Amazon, Google, or Microsoft with vague answers about specific projects, teams, or managers. The real person could speak concretely about their work. The impersonator can't.

The identity checks out but the person doesn't feel right. This is the hardest one to articulate. The LinkedIn is legitimate. The resume is credible. But something about the interaction feels off. Trust that instinct. The profile being real doesn't mean the application or the person on the call is.
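If your team wants to track these signals consistently across interviewers, one lightweight option is a shared checklist. The sketch below is a hypothetical illustration, not how Tofu scores candidates: the field names simply mirror the five signals above, and the threshold of two is an arbitrary placeholder you would need to calibrate against your own pipeline.

```python
from dataclasses import dataclass, fields

@dataclass
class InterviewSignals:
    """Hypothetical checklist mirroring the five signals above."""
    no_linkedin_photo: bool = False
    contact_info_thin_footprint: bool = False
    camera_or_lighting_oddities: bool = False
    prestige_claims_vague_details: bool = False
    interaction_feels_off: bool = False

def triggered(signals):
    """Return the names of the signals that were observed."""
    return [f.name for f in fields(signals) if getattr(signals, f.name)]

def needs_verification(signals, threshold=2):
    """Suggest extra identity verification when enough signals co-occur.
    The default threshold is a placeholder, not a calibrated value."""
    return len(triggered(signals)) >= threshold

# Example: no profile photo, plus vague answers about a claimed senior role.
candidate = InterviewSignals(
    no_linkedin_photo=True,
    prestige_claims_vague_details=True,
)
print(triggered(candidate))
print(needs_verification(candidate))
```

A checklist like this doesn't replace judgment. It just gives interviewers a common vocabulary for recording what felt off, so a pattern across rounds doesn't get lost.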

How Tofu Catches Impersonation

Traditional fraud detection focuses on the resume and contact details: Are the credentials real? Did this person actually work at Google? How old is the email address? Who is the phone carrier?

But with IT worker fraud impersonation, the credentials are real. The resume is accurate. The LinkedIn checks out. Everything looks legitimate - because it belongs to a legitimate person.

That's why we built Tofu to answer a different question: Is the person applying actually the person on the resume?

We do this by analyzing the connection between the applicant and their claimed identity.

For impersonators, that connection doesn't exist. They're using the victim's name and credentials, but the rest of the details are either generated for the purpose of the fraud or purchased on the dark web, and that on its own leaves signals.

The F3 incident proved the value of this approach. The customer had already received our flag on that candidate. The interview happened anyway - but the flag was there. The F3 slip just confirmed what the data had already suggested.

What This Means for Your Hiring Process

Video interviews are no longer proof of identity. Deepfakes have crossed the threshold from "detectable if you look closely" to "good enough to fool experienced interviewers." You cannot rely on video presence as verification.

Surface-level LinkedIn verification is no longer sufficient. A profile with hundreds of connections, a credible work history, and years of activity can still be an impersonation target. The sophistication bar has risen dramatically.

The earlier you verify, the less time you waste. If a final-round candidate turns out to be an IT worker fraud operator, you've already lost hours of recruiter and interviewer time. Moving verification earlier in the funnel - before significant time investment - is increasingly important.

Your candidates may not know they're being impersonated. The person whose identity is being stolen often has no idea. When something feels wrong, consider reaching out through verified channels to confirm. The person you're interviewing may be a fraudster - but the person whose identity they stole might be a real professional who deserves a heads-up.

The Bigger Picture

The 67,000 cases in this analysis represent what we've identified since the beginning of 2026. The true number across the industry is likely far higher. IT worker fraudsters are investing in better infrastructure, more convincing techniques, and more careful target selection. The operations we're seeing today are more sophisticated than anything we saw even a year ago.

The combination of remote hiring, accessible deepfake technology, and exposed professional identities has created the perfect environment for impersonation to thrive. The question isn't whether this is happening in your pipeline - it's whether you're catching it.

If you're seeing suspicious patterns in your applicant flow, or if you want to understand how IT worker fraud impersonation might be affecting your hiring, we're happy to share what we're learning.

And if an applicant ever accidentally hits F3 during an interview, pay attention to what you see.

FAQs

What is impersonation fraud in recruiting?

Impersonation fraud occurs when a job applicant claims to be someone else - typically a real professional with legitimate credentials. The fraudster uses the victim's name, work history, and professional reputation to pass screening and land a job. The LinkedIn profile and resume are real; the person applying is not.

How common is impersonation fraud?

We identified 67,000 cases since the beginning of 2026. The problem is accelerating as remote hiring becomes the norm, deepfake technology becomes more accessible, and professional identities become more exposed online.

Can deepfakes really fool interviewers on video calls?

Yes. Real-time face-swapping software has crossed the usability threshold. Tools like DeepLiveCam can map a static photo onto a live video feed convincingly enough to fool most interviewers, especially over compressed video connections.

Which companies are most commonly impersonated?

Amazon, Google, and Microsoft together account for over 26% of all impersonation attempts. Large, prestigious employers are the most frequent targets because their names carry the most weight on a resume.

What are the warning signs of impersonation fraud?

One of the biggest indicators is no LinkedIn profile picture - it eliminates visual verification entirely. Other signals include contact information with little digital footprint, answers that seem rehearsed or delayed, video quality inconsistencies, and vague answers about specific work despite impressive credentials.

How is impersonation fraud different from resume fraud?

Traditional resume fraud involves fabricating or exaggerating credentials or creating false identities. Impersonation fraud is different - the credentials are real, but the person claiming them is not. The LinkedIn profile, work history, and resume all belong to a legitimate professional whose identity has been stolen.

What should I do if I suspect my identity is being impersonated?

Consider adding a note to your LinkedIn profile alerting recruiters to verify your identity through direct channels. Monitor inbound recruiter messages for roles you didn't apply to. If you receive suspicious contact, document it and consider reaching out to the company's HR or security team.